Optimization

10 items tagged

(2022).

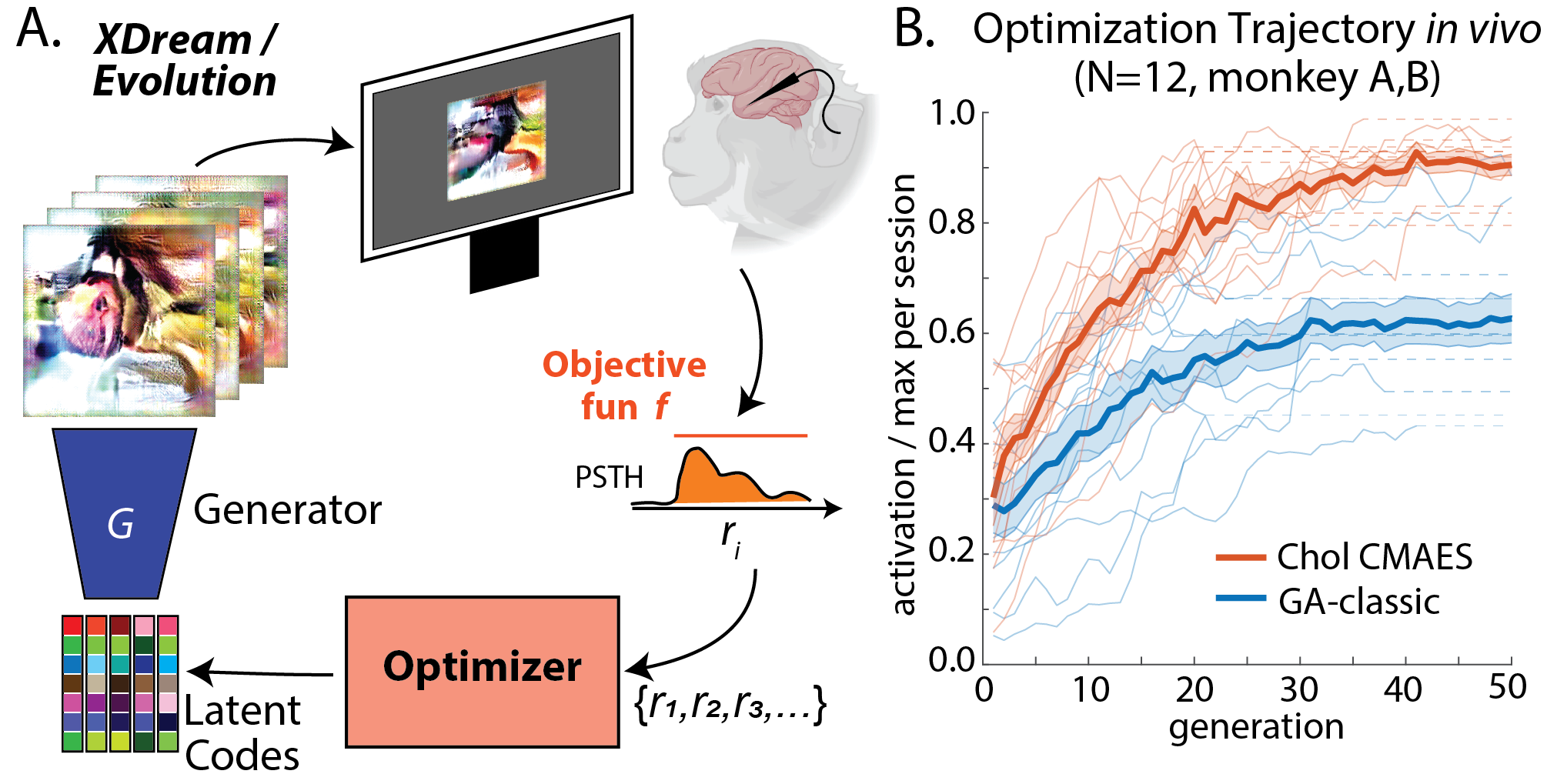

High-performance Evolutionary Algorithms for Online Neuron Control.

GECCO ’22: Proceedings of the Genetic and Evolutionary Computation Conference (Best paper nomination).

(2021).

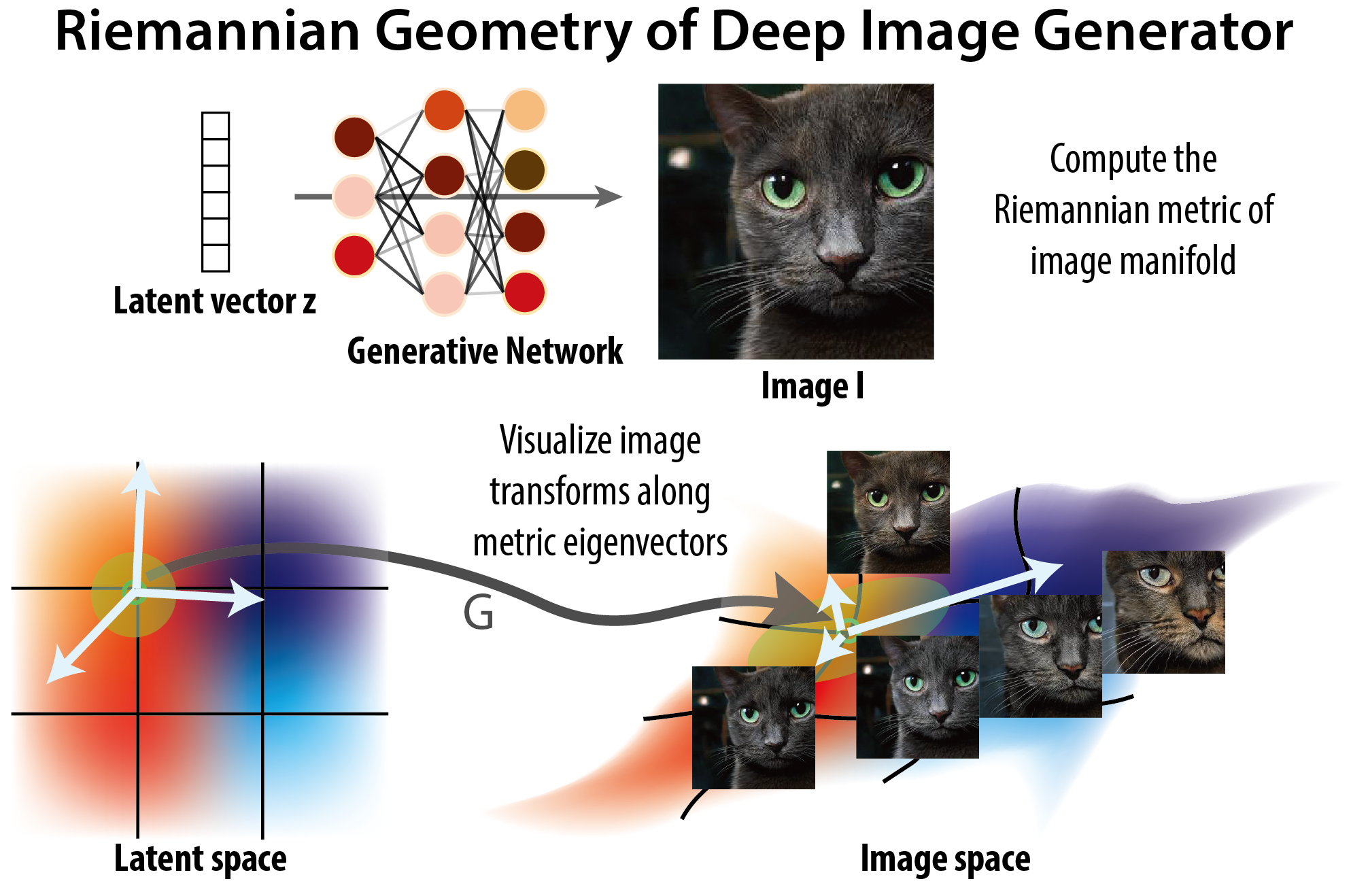

The Geometry of Deep Generative Image Models and its Applications.

International Conference on Learning Representations, 2021.